Unicode for Software Developers

Short history of Unicode

Unicode is a character-encoding standard maintained by the Unicode Consortium. It provides a universal foundation for processing, storing, and exchanging text in any language across modern software systems. Before Unicode existed, computers used a long chain of incompatible encodings: ASCII, then ISO-8859 variants, and earlier systems such as ITA2 (Baudot–Murray), ITA1, and even Morse code.

Short history of Unicode

Creation and founders

The Unicode Consortium was created in 1987 by engineers from Xerox and Apple, including Joe Becker, Lee Collins, and Mark Davis. Their goal was to design a single universal character set that could replace the dozens of regional encodings used around the world.

Why Unicode was needed

The growth of global software made national encodings impossible to maintain. Each region used its own system—ISO-8859-1 for Western Europe, Windows-1250 for Central Europe, Shift-JIS for Japan, GB for China, KOI8-R for Russia, and many others. These encodings were incompatible: the same byte could represent different letters depending on the system, which made text exchange unreliable. Developers often had to build multiple “locale-specific” versions of the same software. Unicode eliminated this fragmentation by defining one code point for every character—Latin, Cyrillic, Arabic, Chinese ideographs, Korean Hangul, Japanese kana, and eventually emojis. With one unified set, global software finally became practical.

Draft proposal of Unicode88 — August, 1988

Joseph D. Becker (Xerox) described the core problem clearly: people need to compute in their native languages, not just in English. ASCII was too small for that. Future systems needed a single multilingual standard covering Latin alphabets, major non-Latin scripts, and thousands of CJK ideographs. https://www.unicode.org/history/unicode88.pdf

ISO 10646 drafts — 1988–1990

ISO proposed a 31-bit design, not aligned with ASCII, and driven by national-body processes. The approach was bureaucratic and technically difficult to implement on 1980s hardware.

Unicode version 1 — October, 1991

Unicode initially rejected ISO’s proposal. The Consortium believed a 16-bit space was enough to encode all necessary characters, and keeping ASCII compatibility was essential. ISO disagreed, and Version 1.0 received no international approval.

Unicode Version 1.1 and ISO/IEC 10646-1:1993 — June, 1993

A compromise was reached: ISO adopted Unicode’s code charts, and Unicode adopted ISO’s plane structure. Both standards were synchronized to define the same characters at the same code points, with guaranteed ASCII compatibility.

Unicode Version 2 — July, 1996

Real-world requirements showed that 16 bits were not enough. China and Japan requested thousands of additional ideographs used in legal names and government documents. China later required all software sold in the country to support its complete repertoire. This pushed Unicode and ISO to expand beyond the original 65,536-code-point limit. Unicode 2.0 introduced surrogate pairs and expanded the design to a 21-bit code space. UCS-2 became UTF-16, enabling characters outside the Basic Multilingual Plane. This expansion was strongly influenced by Chinese national standards such as GB 18030.

Relationship between Unicode and ISO

Unicode and ISO now work jointly, but they began with different roles. ISO, as an international standards body, has political authority—governments depend on it for regulation and language policy. Unicode, founded by private-sector engineers, moved faster and focused on solving technical problems. Over time both organizations aligned: ISO provides global legitimacy, while Unicode defines the technical rules—encoding behavior, normalization, bidirectional text, and emoji specifications. Today they share a synchronized repertoire.

Why ASCII tables still matter?

Unicode kept ASCII as its foundation. The first 128 code points are identical to ASCII, so modern systems inherently support ASCII. Developers still consult ASCII tables because many low-level protocols—HTTP, email, URLs, shells, programming languages—continue to rely on ASCII byte values. Unicode didn’t replace ASCII; it extended it.

Are modern languages affected by Unicode?

Yes. Each language integrates Unicode differently based on its historical string model.

Swift has been strongly influenced by Unicode, especially since Swift 5 switched String to native UTF-8 storage. It treats Unicode correctly at a high level, supporting grapheme clusters, ZWJ emoji, flags, combining characters, and normalization. Indexing operates on extended grapheme clusters rather than UTF-16 units, although bridging to NSString reintroduces UTF-16 semantics when older APIs are involved. As a result, Swift is one of the most Unicode-correct mainstream languages.

Objective-C, through NSString and CFString, uses UTF-16 internally. Its length and indexing operations work at the level of UTF-16 code units rather than Unicode scalars or grapheme clusters. Modern Unicode constructs such as surrogate pairs, flags, ZWJ sequences, and emoji with modifiers break the assumptions behind its basic APIs, and it provides no native way to iterate grapheme clusters without relying on higher-level functions. Objective-C can represent the full Unicode repertoire, but it cannot interpret many modern features correctly at the raw API level.

Java is similarly affected because it also stores String as UTF-16, inheriting the same limitations as Objective-C. Its length() method counts 16-bit code units rather than characters, and any character above the Basic Multilingual Plane requires explicit surrogate handling. Later versions added improved Unicode support in regular expressions and utility classes, but the underlying string model remains firmly tied to the older UTF-16/UCS-2 style. In practice, Java supports Unicode but remains constrained by decisions made in the 1990s.

Kotlin, when running on the JVM, inherits Java’s UTF-16 representation. Its String.length property counts UTF-16 units, and surrogate pairs, emojis, and ZWJ sequences behave exactly as they do in Java. Even Kotlin Multiplatform aligns with UTF-16 for compatibility reasons. As a result, Kotlin’s Unicode behavior is limited by the JVM’s architecture and is not as correct or flexible as more modern approaches like Swift’s.

Which encoding do modern operating system kernels actually use for filenames, system calls, and process arguments? Do macOS, Linux, and Windows rely on UTF-8, UTF-16, or something else internally?

macOS follows UNIX conventions and uses UTF-8 for almost all system-level interfaces exposed to userland. The kernel itself treats filenames as byte sequences but the entire Apple ecosystem assumes UTF-8 as the canonical encoding. Linux kernels do not enforce Unicode at all: they store filenames and arguments as raw bytes and leave interpretation to userland, which today standardizes on UTF-8 through glibc and desktop environments. Windows is the outlier: the NT kernel and the Win32 API are built around UTF-16, and APIs that accept UTF-8 are thin compatibility layers added later. As a result, the behavior of text at the OS boundary still differs significantly across platforms.

Are UI frameworks on iOS and Android aware of modern Unicode features such as emojis, ZWJ sequences, flag pairs, and other complex grapheme clusters?

Yes, but the awareness comes from the text-rendering stack, not the UI frameworks themselves. On iOS, both UIKit and SwiftUI rely on Core Text, Core Graphics, and Apple’s system fonts. These layers fully support emojis, ZWJ sequences, flag pairs, skin-tone modifiers, and other grapheme clusters defined by modern Unicode. On Android, both the View system and Jetpack Compose depend on HarfBuzz, Minikin, and system fonts, which implement the same modern Unicode rules. The frameworks do not parse or interpret complex characters on their own; they delegate rendering, shaping, and cluster boundaries to lower-level text engines that track the evolving Unicode standard.

Why is there a difference between how Foundation handles Unicode features and how UI frameworks on iOS and Android display them? Shouldn’t both layers support the same modern Unicode behavior?

Foundation is an older API layer whose string behavior is shaped by historical constraints such as UTF-16 storage, legacy Objective-C design, and APIs that operate on code units rather than grapheme clusters. As a result, certain modern Unicode constructs—ZWJ sequences, complex emojis, multi-scalar clusters—cannot be interpreted correctly by its low-level methods. UI frameworks, on the other hand, do not parse Unicode themselves. They delegate all shaping, rendering, and grapheme-boundary decisions to system text engines like Core Text and HarfBuzz, which track the latest Unicode specifications. This separation means the rendering layer keeps up with modern Unicode evolution, while older string APIs in Foundation or the JVM may lag behind because they were designed around simpler character models.

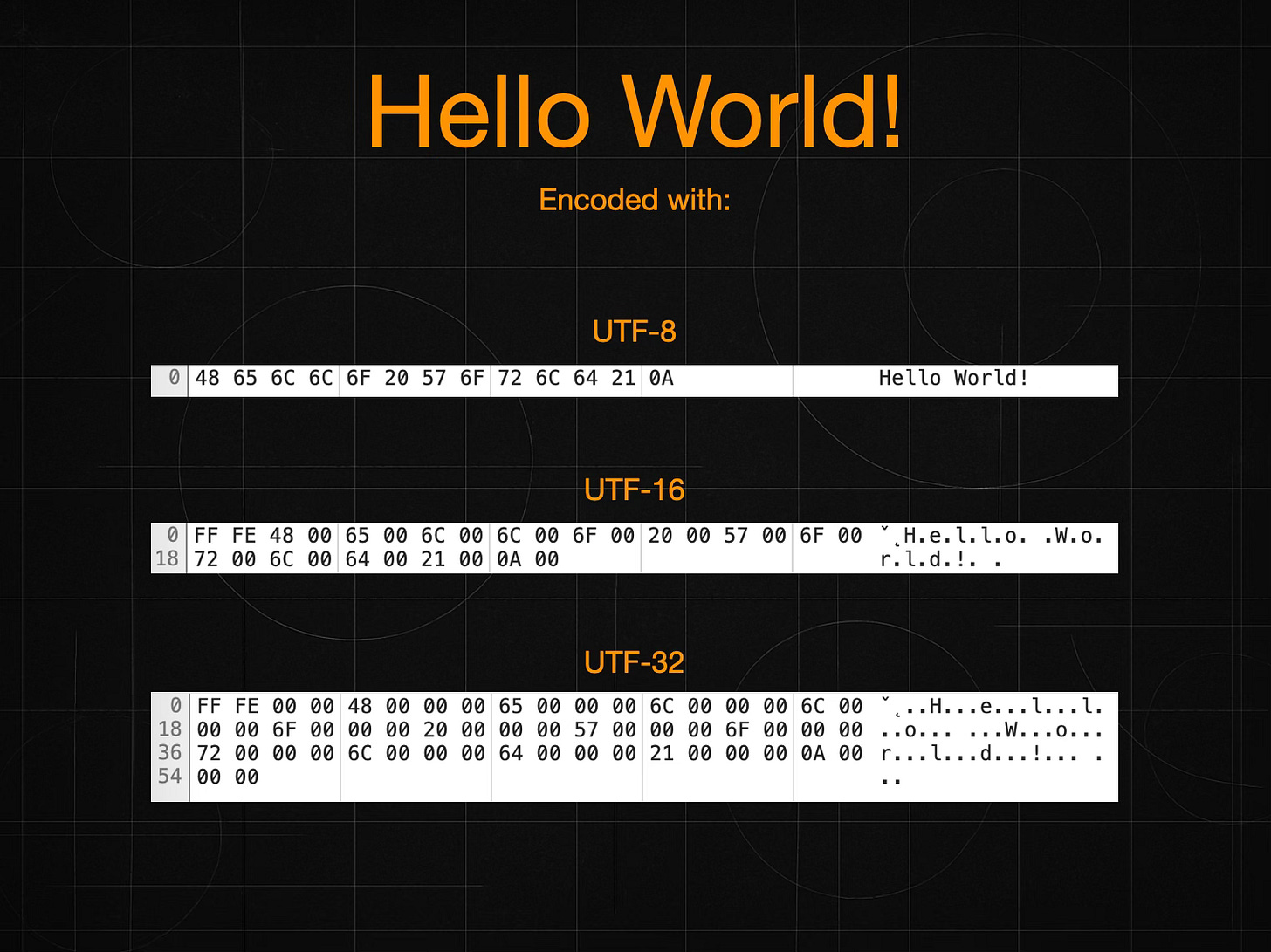

What is the difference between UCS-2, UCS-4, UTF-8, UTF-16, and UTF-32?

UCS-2 was the original fixed-width encoding of Unicode 1.0 in 1991. It stored every character in exactly sixteen bits and reflected the early belief that 65,536 positions would be sufficient for all writing systems. Once the standard began to incorporate larger repertoires—especially after CJK countries highlighted the vast number of ideographs in real use—it became clear that UCS-2 could not scale beyond the Basic Multilingual Plane, and it became obsolete once Unicode adopted surrogate pairs.

UCS-4 emerged from the early ISO/IEC 10646 work between 1989 and 1993. ISO originally proposed a massive 31-bit code space. The final ISO 10646-1:1993 edition reduced this to a fixed 32-bit representation, still far larger than practical Unicode needs. After Unicode and ISO unified their repertoires, UCS-4 remained simply a 32-bit encoding form capable of representing the same 21-bit Unicode range, mainly used in specialized systems where simplicity mattered more than memory.

UTF-8 was invented in 1992 by Ken Thompson and Rob Pike and became part of Unicode 1.1 in 1993. It stores characters in a variable number of bytes from one to four and keeps ASCII as a one-byte identity mapping. UTF-8 quickly became the dominant encoding on Unix systems, the web, and almost all modern text protocols because of its compactness and compatibility with existing ASCII-based tools.

UTF-16 was introduced with Unicode 2.0 in 1996. It replaced UCS-2 by extending the original 16-bit model with surrogate pairs that represent characters above U+FFFF. This allowed Unicode to expand to a 21-bit code space without breaking software built around 16-bit code units. UTF-16 became the internal string representation for Windows, Java, .NET, and Apple’s NSString/CFString.

UTF-32 appeared in the late 1990s as a simplified version of UCS-4 restricted to the actual Unicode range. It stores every code point in exactly four bytes. Although easy to process, it is space-inefficient, so it is rarely used for storage and instead appears mainly in internal structures of compilers, font engines, or low-level text tools.

In summary, UCS-2 and UCS-4 were early fixed-width forms tied to the initial Unicode and ISO designs; UTF-8, UTF-16, and UTF-32 are modern encoding forms introduced throughout the 1990s to reconcile practicality, compatibility, and the need for a larger code space as Unicode matured.

Which Unicode encoding is the most widely used today, and why?

The most widely used Unicode encoding today is UTF-8, by a very large margin. It dominates the modern computing world because it preserves ASCII as a one-byte identity mapping, works smoothly with legacy Unix tools, and compresses Western languages extremely well. Almost all major platforms and technologies—Linux, macOS, iOS, Android, web browsers, servers, JSON, HTML, XML, email, and modern programming languages—standardize on UTF-8 for storage, communication, and file formats. Large-scale measurements confirm this dominance: well over 95% of all web pages are now encoded in UTF-8, and new protocols are designed around it by default.

UTF-16 and UTF-32 remain in use, but mainly as internal representations inside specific programming ecosystems. UTF-16 persists inside Java, Kotlin (JVM and Native), .NET, and Objective-C’s NSString, while UTF-32 appears occasionally in low-level text engines. However, when it comes to actual text exchange, files, services, and the internet as a whole, UTF-8 is by far the most popular encoding in the world today.

If UTF-8 is the most widely used encoding today, why did China push for Unicode to expand beyond the original 16-bit limit?

China’s push to expand Unicode beyond 16 bits had nothing to do with UTF-8’s popularity. It was about the size of the Unicode repertoire itself, not about how Unicode was encoded.

In the early 1990s, Unicode was based on a 16-bit model (UCS-2), which allowed only 65,536 code points. The designers believed this would cover all writing systems. Once China, Japan, and Korea examined the standard more closely, they discovered that the number of ideographs needed for real-world use—especially for names, publications, government documents, and variant forms—far exceeded that limit. Many of these characters are rarely used in ordinary text, but they are legally and administratively essential. If a standard cannot encode the characters in a citizen’s name, a place name, or an official document, it is not viable for national use.

China therefore required Unicode to support all ideographs in national standards, including less common ones, and eventually introduced GB 18030, which made full coverage mandatory for software sold in China. Because the BMP was too small for this expanded repertoire, Unicode had to move from the fixed 16-bit UCS-2 model to the 21-bit model introduced in Unicode 2.0. That transition brought surrogate pairs and UTF-16, enabling more than one million possible code points.

This expansion was a repertoire problem, not an encoding problem. UTF-8 could already encode far more than 65,536 characters from the start, so its popularity is unrelated. Unicode needed more than 16 bits because the world’s scripts genuinely required more characters—not because of how those characters were encoded.

When converting raw data into a string in Swift, Objective-C, Java, or Kotlin on Android, which text encoding should be used?

Across all these languages and platforms, the correct encoding to use when decoding external data is UTF-8. Modern systems—Apple platforms, Android, web services, JSON, XML, SQLite, network protocols, and almost every contemporary file format—standardize on UTF-8 for text interchange.

Swift internally stores strings as UTF-8, and Objective-C uses UTF-16 internally, but both expect incoming data to be UTF-8 and convert it automatically after decoding. Android’s Java and Kotlin also store strings as UTF-16, yet the entire Android ecosystem assumes UTF-8 for external data, from HTTP clients to JSON libraries and resource files.

You should only choose another encoding when dealing with legacy data created in old regional code pages (Windows-125x, ISO-8859-x, Shift-JIS, GBK, etc.). For modern applications and APIs, UTF-8 is the universal and correct choicefor decoding raw bytes into text.